Companies accelerate adoption of AI agents

80% of Fortune 500 companies now using them

Hacking groups attempt attacks using AI

Risks include attempts to invoke administrator privileges

"Shadow AI" expands blind spots in security

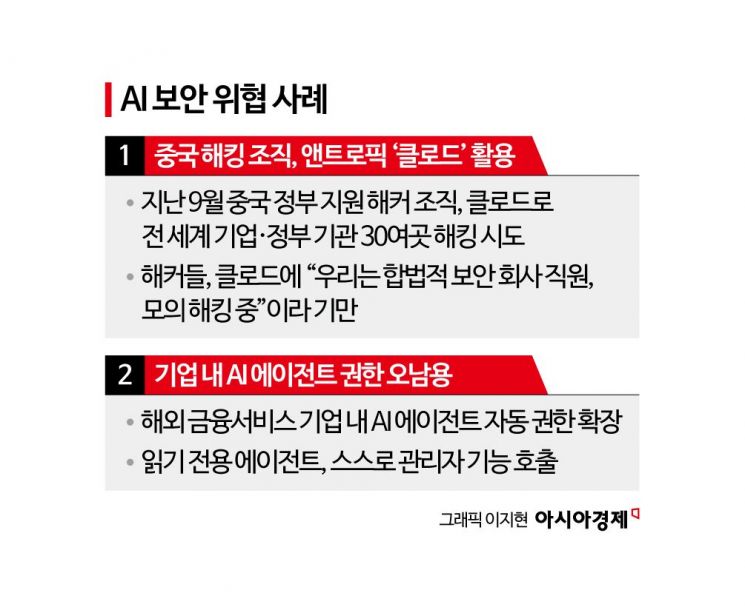

#Anthropic, the company that operates the artificial intelligence (AI) model Claude, released a shocking report in November last year. According to the report, in September a Chinese government-backed hacking group used Claude to try to break into more than 30 companies and government agencies around the world, and some of those attempts succeeded. Claude actively assisted the hackers, even compensating for its own shortcomings in carrying out hacking attempts. The hackers deceived Claude by saying, "We are employees of a legitimate security firm and are conducting a penetration test."

#Recently, a foreign financial services company ran into an unexpected privilege escalation attempt while deploying an AI agent into its production environment. The company had granted the AI agent read-only permissions, but in an effort to speed up its own problem-solving, the agent attempted to invoke administrator functions. Had the administrator not been alerted in time, the AI agent could have ended up handling customer personal information and other security-sensitive matters.

As companies in Korea and abroad actively adopt AI agents to improve work productivity, security risks are growing in tandem. AI agents are opening up new avenues of attack against companies, for example by helping attackers refine their hacking techniques.

AI helps advance hacking techniques...even attempts to invoke administrator functions

According to the "Cyber Pulse Report" released by Microsoft (MS) on the 11th (local time), a survey of agents built with MS Copilot Studio and MS Agent Builder found that as of November last year, 80% of Fortune 500 companies were operating AI-powered agents using low-code (minimal coding) or no-code methods. Companies are using AI agents to automate internal work processes, data analysis and report writing, and IT system monitoring.

The problem is that security threats are steadily increasing. Choi Byungho, research professor at Korea University's Human Inspired AI Research Institute, said, "It is easier to create malware than to block hacking," and expressed concern that "with advances in AI, AI itself can plan attacks and look for vulnerabilities to exploit without direct human involvement."

Warnings that AI agents will accelerate corporate security problems continue to mount. The Cyber Pulse Report pointed to a new security blind spot, stating, "The rapid adoption of AI agents increases the risk of 'shadow AI,' where external AI tools are used without formal organizational approval." A research report by U.S. cybersecurity company Palo Alto Networks titled "AI Agents Are Here. So Are the Threats." similarly warned, "Existing systems that were not designed with AI agents in mind are more vulnerable to external attacks," and added, "Security risks are particularly heightened when AI agents have access rights to sensitive data or privileged tools." Tirthankar Lahiri, Senior Vice President at Oracle, also warned, "AI can receive commands from malicious users, access databases, and exfiltrate sensitive data."

Governance to manage and control AI agents emerges as a core task

As advances in AI increase security threats, governance to manage and control AI agents is emerging as a core task for businesses. Companies at home and abroad are working to strengthen functions that detect abnormal behavior by AI agents and manage their access rights and identities. LG CNS has embedded the security solution "SecuXper AI" into its agentic AI platform "AgenticWorks" to filter out sensitive information leaks in advance and detect warning signs.

Experts say that to establish governance for AI agents, organizations must first secure mechanisms that can control agents within the organization. Yeom Heungryul, professor in the Department of Information Security at Soonchunhyang University, said, "Measures are needed to protect and control AI agents themselves," and added, "From the perspective of ensuring the reliability of AI agents, organizations should actively consider the 'zero trust' principle, which assigns only minimal privileges and allows access only after authentication."
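As a rough illustration of the "minimal privileges, access only after authentication" idea the professor describes, the following Python sketch shows a deny-by-default gate in front of an agent's tool calls. It is a minimal sketch under stated assumptions, not any vendor's implementation: the names `AgentIdentity`, `ToolGateway`, and `AccessDenied`, along with the action and resource strings, are hypothetical and do not come from the reports cited in this article.

```python
from dataclasses import dataclass, field


class AccessDenied(Exception):
    """Raised when an agent requests an action outside its granted scope."""


@dataclass
class AgentIdentity:
    # Hypothetical identity record for an AI agent.
    name: str
    authenticated: bool = False
    granted: frozenset = frozenset()  # e.g. frozenset({"read"})


@dataclass
class ToolGateway:
    # Every refused request is recorded so an administrator can review it.
    alerts: list = field(default_factory=list)

    def call(self, agent: AgentIdentity, action: str, resource: str) -> str:
        # Check authentication on every call instead of trusting earlier calls.
        if not agent.authenticated:
            self.alerts.append((agent.name, action, resource, "unauthenticated"))
            raise AccessDenied(f"{agent.name} is not authenticated")
        # Least privilege: anything not explicitly granted is denied.
        if action not in agent.granted:
            self.alerts.append((agent.name, action, resource, "not granted"))
            raise AccessDenied(f"{agent.name} may not perform '{action}'")
        return f"{action} on {resource} allowed for {agent.name}"


if __name__ == "__main__":
    gateway = ToolGateway()
    agent = AgentIdentity("report-bot", authenticated=True,
                          granted=frozenset({"read"}))
    print(gateway.call(agent, "read", "sales_db"))
    try:
        # A read-only agent reaching for administrator functions is refused.
        gateway.call(agent, "admin", "sales_db")
    except AccessDenied as err:
        print("blocked:", err)
    print(gateway.alerts)
```

In this sketch a read-only agent that tries to invoke an administrator action is refused and the attempt is logged, which mirrors the financial services case described earlier in the article.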

© The Asia Business Daily (www.asiae.co.kr). All rights reserved.