First New Chip in Over Two Years Since the Unveiling of 'Maia 100' in 2023

Built on TSMC's 3nm Process, Featuring HBM3e Memory

Superior Inference Efficiency: "30% Higher Performance per Dollar"

Proprietary SDK Released, Targeting NVIDIA CUDA

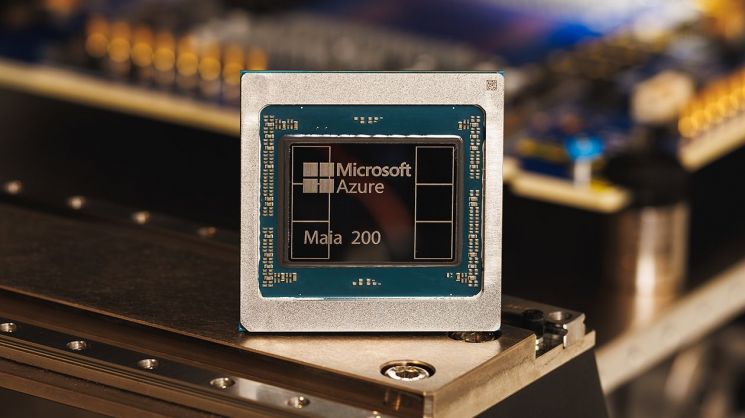

Microsoft (MS) unveiled the AI accelerator 'Maia 200' on January 27, a chip designed to make artificial intelligence (AI) inference more efficient. The Maia 200 will run AI inference models on MS's cloud platform, Azure.

The Maia 200 is built on TSMC's 3-nanometer (nm) process and features an architecture optimized for high-performance AI inference. In particular, it integrates a 216GB HBM3e memory system with a bandwidth of 7 terabytes (TB) per second, native FP8/FP4 tensor cores, and a data movement engine. According to MS, this enables inference performance optimized for large-scale models.
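To illustrate why memory bandwidth matters for inference, here is a back-of-envelope calculation using only the two figures MS published (216 GB capacity, 7 TB/s bandwidth). It assumes decimal units (1 TB = 1,000 GB) and ideal, sustained bandwidth, which real workloads do not reach. The function names are hypothetical and for illustration only.

```python
# Back-of-envelope: how long one full read of Maia 200's HBM takes.
# Assumptions (not from MS): decimal units, ideal sustained bandwidth.

HBM_CAPACITY_GB = 216      # published capacity
HBM_BANDWIDTH_TB_S = 7     # published bandwidth


def full_sweep_ms(capacity_gb: float, bandwidth_tb_s: float) -> float:
    """Milliseconds needed to stream `capacity_gb` once at `bandwidth_tb_s`."""
    bandwidth_gb_s = bandwidth_tb_s * 1000
    return capacity_gb / bandwidth_gb_s * 1000


def max_sweeps_per_second(capacity_gb: float, bandwidth_tb_s: float) -> float:
    """Upper bound on full-memory reads per second at ideal bandwidth."""
    return 1000 / full_sweep_ms(capacity_gb, bandwidth_tb_s)


if __name__ == "__main__":
    ms = full_sweep_ms(HBM_CAPACITY_GB, HBM_BANDWIDTH_TB_S)
    rate = max_sweeps_per_second(HBM_CAPACITY_GB, HBM_BANDWIDTH_TB_S)
    print(f"One full HBM sweep: {ms:.1f} ms")      # 30.9 ms
    print(f"Max sweeps per second: {rate:.1f}")    # 32.4
```

If a model's weights filled the entire 216 GB and had to be read once per generated token, this would cap sequential token generation at roughly 32 tokens per second per chip, which is why inference chips emphasize bandwidth alongside raw compute.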

Microsoft announced on the 27th that it has unveiled the AI accelerator "Maia 200," which improves the efficiency of artificial intelligence (AI) inference tasks. Product image of Maia 200. Provided by Microsoft Korea.


According to MS, the chip also outperforms competing AI accelerators in raw compute. The Maia 200 delivers three times the 4-bit (FP4) throughput of Amazon Web Services (AWS)'s third-generation in-house AI chip, Trainium, and exceeds the 8-bit (FP8) performance of Google's seventh-generation Tensor Processing Unit (TPU).

It also improved computational efficiency. Satya Nadella, CEO of MS, explained, "This product, designed for industry-leading inference efficiency, delivers 30% higher performance per dollar compared to existing systems."
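"30% higher performance per dollar" can be read in two ways, and the numbers differ. The short sketch below works through both, assuming the 30% gain applies uniformly; MS did not specify which "existing systems" form the baseline, and the function names are hypothetical.

```python
# Two readings of a 30% performance-per-dollar gain.
# Assumption: the gain applies uniformly to the workload in question.

PERF_PER_DOLLAR_GAIN = 0.30  # MS's stated figure vs. unspecified "existing systems"


def throughput_at_same_budget(gain: float) -> float:
    """Relative throughput when spending the same amount."""
    return 1 + gain


def cost_at_same_throughput(gain: float) -> float:
    """Relative cost when delivering the same throughput."""
    return 1 / (1 + gain)


if __name__ == "__main__":
    t = throughput_at_same_budget(PERF_PER_DOLLAR_GAIN)
    c = cost_at_same_throughput(PERF_PER_DOLLAR_GAIN)
    print(f"Same budget: {t:.2f}x throughput")        # 1.30x
    print(f"Same throughput: {c:.3f}x cost")          # 0.769x
    print(f"Cost reduction: {(1 - c) * 100:.1f}%")    # 23.1%
```

In other words, 30% more performance per dollar corresponds to roughly a 23% lower cost for the same amount of inference work, not a 30% cost cut.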

The Maia 200 packs more than 140 billion transistors for large-scale AI computation. MS said this is enough to run today's large models with headroom for next-generation models. To relieve data-supply bottlenecks, MS also redesigned the memory subsystem from the ground up to maximize token throughput.

MS also streamlined how the Maia 200 is installed and operated in data centers. Because the backend network and liquid cooling system were validated and integrated early, the time from a chip's arrival to its deployment has been cut to less than half of what it previously took.

The Maia 200 supports a variety of models, including OpenAI's latest model, GPT-5.2. MS plans to use the chip to improve its own models and for reinforcement learning, where it is expected to play a key role in speeding up the generation and filtering of high-quality domain data for subsequent training.

The Maia 200 has already been installed at MS's data center in Iowa, USA, and will next be deployed at its data center in Phoenix, Arizona, where it will be offered to Azure cloud customers.

This is the first time in over two years that MS has released its own chip since the unveiling of the 'Maia 100' in November 2023. However, the Maia 100 was deployed internally within MS Azure Cloud and was not widely available, thus having limited market impact. At that time, MS was considered to be lagging behind competitors such as Amazon and Google in the custom chip market.

Industry experts view the Maia 200 as effectively MS's first commercialized chip. MS is expected to use Maia 200 to reduce its reliance on NVIDIA's graphics processing units (GPUs).

Meanwhile, MS has also released a preview of the 'Maia 200 Software Development Kit (SDK)' to help developers run models on the chip. The SDK is designed to be compatible with NVIDIA's CUDA software and is expected to broaden the Maia 200 software ecosystem. CUDA is NVIDIA's parallel computing platform and toolkit that lets developers use GPUs for AI and other computations, and it is the foundation of NVIDIA's AI development ecosystem.

© The Asia Business Daily(www.asiae.co.kr). All rights reserved.