Comprehensive Development Guidebook Covering Legal, Ethical, and Technical Requirements to Be Produced and Distributed

One-Stop Support Plan Established... Public Training Data to Be Produced

[Asia Economy Reporter Minyoung Cha] In January, the startup Scatter Lab launched the artificial intelligence (AI) chatbot "Iruda" with high ambitions. Iruda, which became popular among teens and people in their twenties with the catchphrase "Your first AI friend," was shut down after just two weeks of service following a series of controversies that culminated in allegations of personal information leakage. The leak allegations arose because Scatter Lab had collected KakaoTalk conversations without user consent to train Iruda. AI technology is advancing rapidly, but public debate has lagged behind and ethical standards remain ambiguous, which has posed challenges for the government as well.

Following the "Iruda incident," discussions on AI reliability gained momentum, prompting the government to prepare concrete action plans. Given that AI technology is still in its early stages, the focus was primarily placed on voluntary regulation by the private sector.

Strategy for Realizing Trustworthy Artificial Intelligence

On the 13th, the Ministry of Science and ICT announced the "Strategy for Realizing Trustworthy Artificial Intelligence" at the 22nd plenary meeting of the Presidential Committee on the Fourth Industrial Revolution. This strategy aims to place humans at the center of AI development.

This strategy is a follow-up measure that concretizes the implementation plan of the "AI Ethics Guidelines" announced in December last year. It establishes a support system to help the private sector autonomously secure trustworthiness and includes support measures for startups lacking financial and technological resources.

The existing AI Ethics Guidelines set out three major principles that all members of society must observe to achieve "human-centered AI": human dignity, the public good of society, and the purposiveness of technology. They also include ten requirements, such as accountability, safety, and transparency.

The Ministry of Science and ICT presented the vision of "AI that anyone can trust, AI that everyone can enjoy." The plan, made up of three major strategies covering technology, institutions, and ethics along with ten implementation tasks, will be rolled out in stages through 2025. By 2025, the goals are to rank fifth in the world in responsible AI utilization, tenth as a trustworthy society, and third as a safe cyber nation.

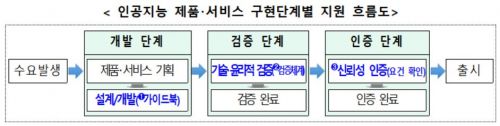

Specifically, a trust assurance system will be established for each stage of AI product and service implementation. For each stage at which the private sector develops AI products and services, the government will present trust assurance standards and methodologies that companies, developers, and third parties can refer to when building in trustworthiness. Detailed development guidebooks will also be produced and distributed.

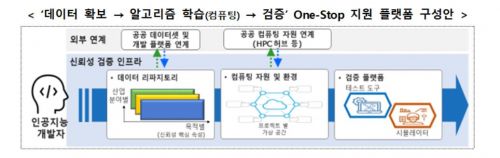

Additionally, to help the private sector secure AI trustworthiness, an integrated platform will be operated that provides support for everything from data acquisition to algorithm training and verification. The "AI Hub" platform, which provides training data and computing resources, will analyze the level of trust attributes according to the verification system. It will also support testing in environments similar to real-world conditions.

Government Establishes Standards for Trust Assurance

Development of core technologies to enhance AI explainability, fairness, and robustness will also be promoted. Budgets allocated for technology development over the next five years include 45 billion KRW for explainability and 20 billion KRW for fairness, while technology development for robustness is currently in the planning stage. Kim Kyung-man, head of the AI Policy Division at the Ministry of Science and ICT, explained, "A characteristic of AI technology is that the reasons behind decisions are often unknown, but the trend is moving toward making even that explainable."

The reliability of training data will also be improved. Standard criteria, such as trust assurance verification indicators, will be established that both the public and private sectors must follow when producing AI training data. Trust assurance for high-risk AI will also be promoted. The risks of such AI will have to be disclosed in advance, after which users will be able to "refuse use," "request an explanation of results," and "file objections." For example, applicants will be able to file objections in AI-based recruitment screening.

Conducting Social Impact Assessments... Ethics Education

AI impact assessments will also be conducted. To comprehensively and systematically analyze and respond to the effects of AI on every aspect of citizens' lives, the social impact assessments stipulated in Article 56 of the "Basic Act on Intelligent Informatization" will be introduced.

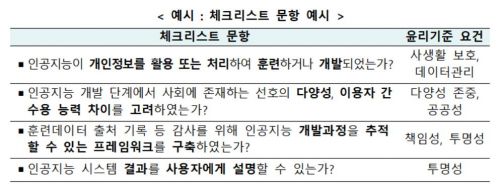

AI ethics education across society will be strengthened in coordination with related ministries. A general framework for AI ethics education will be prepared, and checklists enabling researchers, developers, and users to self-assess ethical compliance in their work and daily lives will be developed and distributed.

Choi Ki-young, Minister of Science and ICT, stated, "The recent chatbot incident has left our society with many questions about how to deal with AI reliability. The Ministry of Science and ICT will clarify AI trust assurance standards so that companies and researchers are not confused when developing AI products and services, and so that the public does not suffer harm."

He added, "We plan to steadily implement this strategy to become a human-centered AI powerhouse, including preparing support measures so that small and medium-sized enterprises lacking technological and financial resources can meet trustworthiness standards without difficulty."

© The Asia Business Daily(www.asiae.co.kr). All rights reserved.
