Stanford University Releases 'AI Index Report 2025'

GPT-4 Achieves 16 Percentage Points Higher Diagnostic Accuracy Than Human Doctors

A new report finds that artificial intelligence (AI) has surpassed human doctors in medical diagnosis. GPT-4, an AI model from OpenAI, was found to outperform human doctors in diagnostic tests. This has led some to conclude that the emergence of an 'AI doctor' is within sight.

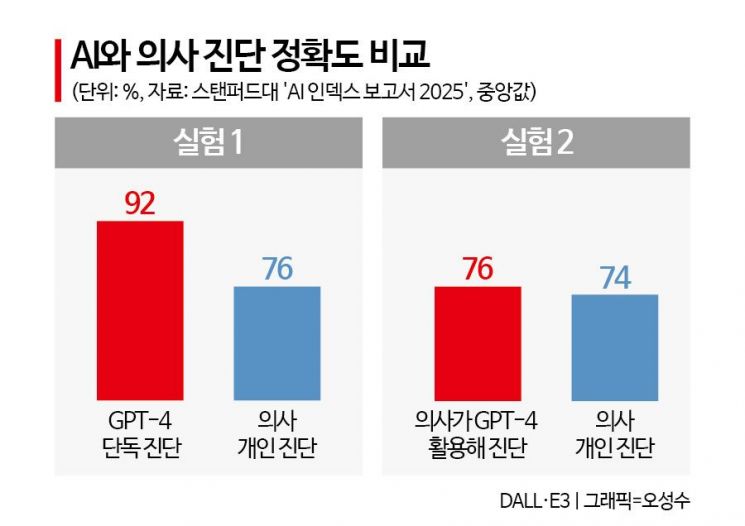

According to the 'AI Index 2025' report released on the 8th (local time) by the Stanford Institute for Human-Centered Artificial Intelligence (HAI) in the United States, GPT-4 showed 16 percentage points higher accuracy than human doctors in diagnostic tests based on clinical cases. The report stated, "Overall, GPT-4's standalone diagnostic performance was the highest and the results were more consistent." It added, "On the other hand, standalone human doctor diagnosis showed lower performance," and "However, when human doctors collaborated with AI, the performance varied greatly depending on how it was utilized."

The diagnostic experiment cited in the AI Index 2025 report gave GPT-4 and 50 U.S. clinicians (26 specialists and 24 residents) six difficult patient cases to diagnose. It then compared the diagnostic accuracy of three groups: 'GPT-4 alone,' 'human doctors collaborating with GPT-4,' and 'human doctors alone.' The first comparison set 'GPT-4 versus human doctors,' and the second set 'human doctors collaborating with GPT-4 versus human doctors alone.'

The median accuracy of GPT-4 diagnosing alone (92%) was 16 percentage points higher than that of the group diagnosed solely by human doctors (76%). The median is the value exactly in the middle when the data are arranged in order. The median accuracy of doctors collaborating with GPT-4 (76%) was only 2 percentage points higher than that of doctors diagnosing alone (74%), a difference found to be insignificant. Accuracy was evaluated independently, against predetermined criteria, by two internists who did not take part in the experiment. They graded the diagnoses without knowing who had made each one.
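For readers who want to check the arithmetic, the short Python sketch below shows how a median is taken and how a gap in percentage points is computed. The list `example_scores` and the helper `percentage_point_gap` are hypothetical and exist only for illustration; they are not data or methods from the study. The medians plugged in at the end are the figures as quoted in this article.

```python
from statistics import median

# Hypothetical per-case accuracy scores (percent), for illustration only.
# These are NOT data from the experiment described in the report.
example_scores = [60, 74, 92, 81, 70]

# The median is the middle value once the scores are sorted:
# 60, 70, 74, 81, 92 -> 74
middle = median(example_scores)


def percentage_point_gap(a_percent, b_percent):
    """Return the simple difference between two percentages, in percentage points."""
    return a_percent - b_percent


# Medians as quoted in the article for each comparison.
gap_gpt4_vs_doctors = percentage_point_gap(92, 76)      # GPT-4 alone vs. doctors alone
gap_collab_vs_doctors = percentage_point_gap(76, 74)    # doctors with GPT-4 vs. doctors alone

print(middle, gap_gpt4_vs_doctors, gap_collab_vs_doctors)
```

A percentage point gap is a plain subtraction of two percentages, which is why the 92% and 76% figures yield a 16 point difference.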

The report's assessment is significant because it shows how the status of AI in medicine is changing. AI is already widely used in robotic surgery, medical data analysis, and AI-based cancer screening solutions. Until now, however, its role has been limited to assisting doctors' judgment.

The AI Index is regarded as the most authoritative AI white paper in the world. With its analysis showing that generative AI models such as GPT-4 diagnose better than doctors, some expect that the day AI doctors become a common sight in hospitals is not far off.

The report stated, "These experimental results indicate that overall, GPT-4's diagnostic performance is the highest and most consistent," and "Even when AI collaborates with doctors, the outcomes vary depending on the individual doctor's judgment style and utilization ability." It added, "Recent studies have shown that AI outperforms medical staff in areas such as cancer detection and identifying critically ill patients," and "The scope of AI utilization is expanding beyond simple diagnosis to more complex clinical judgment areas."

In addition, as of last year GPT-4 recorded 96.0% accuracy on 'MedQA,' a representative benchmark for measuring clinical knowledge. That is a rise of 28.4 percentage points from 67.6% in 2022. MedQA is a test built from medical questions at the level of the U.S. medical licensing examination and is used to evaluate an AI model's clinical knowledge.
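The 28.4 figure is a change in percentage points, which is not the same as a relative percent increase. The minimal Python sketch below works through both using the two MedQA scores quoted above; the function names `point_change` and `relative_change` are hypothetical and chosen only for this illustration.

```python
def point_change(old_percent, new_percent):
    """Change between two percentages, expressed in percentage points."""
    return new_percent - old_percent


def relative_change(old_percent, new_percent):
    """Change relative to the starting value, expressed in percent."""
    return (new_percent - old_percent) / old_percent * 100


# MedQA accuracy figures as quoted in the article.
score_2022 = 67.6
score_last_year = 96.0

# Rounded to one decimal place to avoid floating-point noise.
points = round(point_change(score_2022, score_last_year), 1)
relative = round(relative_change(score_2022, score_last_year), 1)

print(f"{points} percentage points, {relative}% relative increase")
```

In other words, the score rose by 28.4 percentage points, which corresponds to roughly a 42% improvement over the 2022 level.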

The report added, "There are research results showing that collaboration between AI and doctors can produce the best outcomes, making this field an important research topic in the future," but also noted, "There are concerns about the risks inherent in AI systems themselves, such as the 'hallucination' problem generating false information and unpredictable errors, raising issues of reliability and safety. Therefore, policy preparations considering these risk factors are necessary."

As AI's diagnostic performance rapidly improves in the medical field, discussions about the future of medical professionals are continuing in South Korea as well. The Bank of Korea's report 'AI and the Korean Economy,' released in February, predicted, "AI is likely not simply to replace human labor but to complement human judgment in high-risk fields such as healthcare," and "In particular, AI development has the potential to improve the quality of medical services."

© The Asia Business Daily(www.asiae.co.kr). All rights reserved.