Interview with Kim Myung-joo, Director of the AI Safety Research Institute

Analyzing AI Risks... Collaboration with Companies

Attending International Conferences... Sharing Policies of Each Country

The Artificial Intelligence (AI) Safety Research Institute is establishing a ‘Risk Management System.’ This marks the first mission of the AI Safety Research Institute, which the government set up last November to enhance AI safety. The system aims to identify, analyze, and develop countermeasures for hidden risks in AI, with participation from related industries. It is expected to contribute to alleviating public anxiety and concerns about AI.

Kim Myung-joo, Director of the AI Safety Research Institute, is posing ahead of an interview on the 9th at the Global R&D Center in Pangyo, Seongnam, Gyeonggi Province. Photo by Kang Jin-hyung

Kim Myung-joo, head of the AI Safety Research Institute, recently stated in an interview with Asia Economy, "We will build a risk management system," adding, "This year, we will begin this work together with AI companies such as LG AI Research, Naver, and Kakao." Kim explained, "Even AI companies themselves do not fully understand the hidden dangers within AI. The AI risk management system is a tool to detect, analyze, and devise measures to mitigate these hidden risks."

The AI Safety Research Institute was established under the leadership of the Ministry of Science and ICT, following the 'AI Seoul Summit' held in May last year. At that time, President Yoon Suk Yeol affirmed that AI safety is a core element of responsible AI innovation and expressed his commitment to joining the global AI safety network by establishing the AI Safety Research Institute.

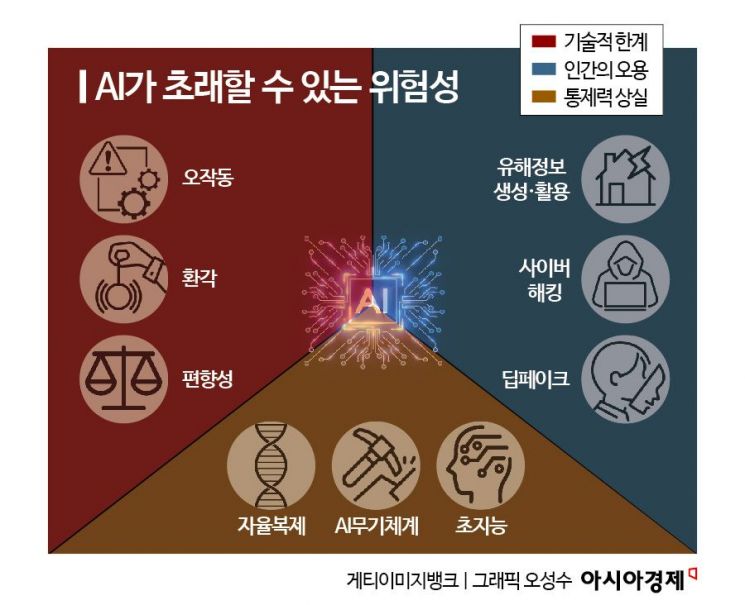

Depending on how AI is utilized, it can become a weapon to enhance national competitiveness or a threat that could collapse social systems. Kim explained, "If someone with no hacking skills can create malware using AI services, it poses a cybersecurity threat. If AI chatbots provide answers containing sociocultural biases based on the language of the questions, it could lead to international disputes." The development of Artificial General Intelligence (AGI), which surpasses human intelligence, also increases the risk of losing control.

"We will conduct AI safety evaluations... results will remain confidential"

In Western countries, AI safety evaluations are being conducted based on various standards, leading to calls for international standardization of these assessments. The France-based nonprofit organization SaferAI recently published results evaluating the risk management capabilities of six AI companies. Anthropic scored the highest with 2.22 out of 5 points, followed by OpenAI with 1.61. SaferAI concluded that these companies generally have weak AI risk management capabilities and require significant improvement.

Korea's AI Safety Research Institute also plans to conduct safety evaluations of various AI services. However, the evaluation results will not be disclosed externally. Kim stated, "Since each institution uses different evaluation criteria and tools, international standardization will be an important future task." The institute plans to collaborate with the UK, which first introduced the concept of AI safety, to develop international standards. The UK held the first AI Safety Summit in November 2023, and its AI Safety Institute was the first of its kind established worldwide.

Kim Myung-joo, Director of the Artificial Intelligence Safety Research Institute, is being interviewed on the 9th at the Global R&D Center in Pangyo, Seongnam, Gyeonggi Province. Photo by Kang Jin-hyung

Following this, the United States, Japan, Singapore, and Canada established AI Safety Research Institutes, and South Korea joined the network by opening its institute last November. Kim noted that there is intense competition among countries to take the lead in AI safety. He explained that the positions of the US, which hosts many AI companies, and European countries, which emphasize user protection, are sharply divided. Regarding the Trump administration’s AI safety policies, he predicted, "Although deregulation was the slogan, the US might strengthen safety measures if it benefits the country under its America-first strategy."

Attending AI Summit next month... follow-up work on the Basic Act underway

Kim is fully engaged in preparing for the AI Summit scheduled for February 10-11 in Paris, France. He said, "To leap into the top three AI powers, Korea plans to showcase how it will pursue innovation and inclusiveness," adding, "Since the official name of this summit is the ‘AI Action Summit,’ the European Union is expected to announce practical guidelines for companies to comply with AI laws."

Kim is also working on follow-up measures for the AI Basic Act, which the National Assembly recently passed. He said, "We need to establish criteria for ‘advanced AI’ and ‘high-impact AI’ as defined in the AI Basic Act and develop guidelines for companies." He added, "I will act as a ‘Sherpa’ to help companies overcome the entry barriers necessary to provide global AI services."

Kim emphasized, "2025 will be the year when AI becomes fully integrated into daily life," and expressed hope that "as AI literacy matures, citizens will develop awareness of issues and actively voice their opinions on risks."

© The Asia Business Daily(www.asiae.co.kr). All rights reserved.
