Professor Yoo Jaejun's Team at UNIST Presents Innovations in Lightweight Models, Video, and Design at ECCV 2024

Professor Yoo Jaejun's team at the Graduate School of Artificial Intelligence at UNIST (President Park Jongrae) has presented new directions for AI technology, ranging from AI model compression to design automation.

Professor Yoo's team presented three papers at the European Conference on Computer Vision (ECCV) 2024, a world-renowned computer vision conference that concluded on October 4. The team reported innovative results in model compression, efficient video generation, and design automation using multimodal AI.

① AI, 323 Times Smaller with No Loss in Performance

Professor Yoo Jaejun's team succeeded in compressing a Generative Adversarial Network (GAN), a type of image generation AI, by up to 323 times without degrading its performance. Using knowledge distillation, in which a small "student" model learns to imitate a large "teacher" model, they demonstrated that high-performance AI can run efficiently on edge devices and low-power computers without high-end hardware.

Professor Yoo stated, "We have proven that a GAN compressed by 323 times can still generate high-quality images at the existing level," adding, "This opens the door to using high-performance AI on edge computing and low-power devices."

First author Yeo Sangyeop explained, "This will greatly expand the scope of AI applications by making it possible to implement high-performance AI even in resource-limited environments."

The research team introduced two techniques, DiME and NICKEL. DiME increases training stability by comparing the distributions of generated images rather than individual images. For example, if the teacher model generates an image of Kim Taehee, the student model can still learn even if it generates an image of Song Hyekyo or Jun Jihyun, because what matters is whether its outputs follow the same overall distribution as the teacher's.
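The Kim Taehee example describes matching at the level of distributions instead of individual images. The sketch below contrasts the two ideas under stated assumptions: images are replaced by hypothetical 2-D feature vectors, and maximum mean discrepancy (MMD) with a Gaussian kernel stands in for a distribution-level distance. MMD and every function name here are illustrative choices, not DiME's actual loss.

```python
import math

def rbf_kernel(x, y, bandwidth=1.0):
    """Gaussian (RBF) kernel between two feature vectors."""
    sq_dist = sum((a - b) ** 2 for a, b in zip(x, y))
    return math.exp(-sq_dist / (2 * bandwidth ** 2))

def mmd2(xs, ys, bandwidth=1.0):
    """Biased estimate of squared MMD: a distance between two sample sets (assumed stand-in)."""
    def mean_k(a, b):
        return sum(rbf_kernel(p, q, bandwidth) for p in a for q in b) / (len(a) * len(b))
    return mean_k(xs, xs) + mean_k(ys, ys) - 2 * mean_k(xs, ys)

def pairwise_l2(xs, ys):
    """Per-sample loss: each student output must match its paired teacher output."""
    return sum(sum((a - b) ** 2 for a, b in zip(x, y)) for x, y in zip(xs, ys)) / len(xs)

# Hypothetical feature vectors of four images produced by the teacher.
teacher = [[0.0, 0.0], [1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
# The student produces the same set of images, but for different inputs.
student_shuffled = [teacher[3], teacher[2], teacher[1], teacher[0]]
# A student whose outputs have collapsed to a single point.
student_collapsed = [[0.5, 0.5]] * 4

print(pairwise_l2(teacher, student_shuffled))  # 2.0: heavily penalized per sample
print(mmd2(teacher, student_shuffled))         # close to 0: same distribution
print(mmd2(teacher, student_collapsed))        # clearly positive: distribution differs
```

The per-sample loss punishes the shuffled student even though it produces exactly the teacher's set of images, while the distribution-level distance does not. The distribution-level distance still flags the collapsed student, whose outputs no longer cover the teacher's range.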

The NICKEL technique optimizes the interaction between the generator and the discriminator (the classifier that judges whether an image is real or generated), helping lightweight models maintain high performance. By combining the two techniques, the team showed that a GAN compressed by 323 times could still generate images of the same quality as the original model.

② Video Generation AI: High-Resolution Videos Without High-Performance Computing Resources

Professor Yoo Jaejun's team developed a hybrid video generation model (HVDM) that can efficiently generate high-resolution videos even in environments lacking high-performance computing resources. HVDM combines 2D triplane representation with 3D wavelet transforms, enabling it to process both the global context and fine details of videos simultaneously.
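The two representations HVDM combines can be illustrated on a toy video. The sketch below makes simplifying assumptions and is not HVDM's implementation. It builds the "triplane" by simply averaging the T x H x W volume along each axis, whereas the actual model learns its representation. It also uses a one-level, unnormalized (averaging) 3D Haar wavelet transform, so the choice of Haar wavelet is an assumption. The function names `haar3d` and `triplane` are hypothetical.

```python
from itertools import product

def haar3d(video):
    """One-level 3D Haar transform (averaging variant) of a T x H x W video.

    Returns a dict mapping a subband name in (time, height, width) order,
    e.g. 'LLL' or 'HLH', to a (T/2) x (H/2) x (W/2) array. 'LLL' is a coarse
    summary; the other seven subbands hold fine detail.
    """
    T, H, W = len(video), len(video[0]), len(video[0][0])
    assert T % 2 == 0 and H % 2 == 0 and W % 2 == 0, "dimensions must be even"
    bands = {}
    for i, j, k in product((0, 1), repeat=3):  # 0 = low-pass, 1 = high-pass per axis
        name = "".join("LH"[b] for b in (i, j, k))
        band = [[[0.0] * (W // 2) for _ in range(H // 2)] for _ in range(T // 2)]
        for t, h, w in product(range(T // 2), range(H // 2), range(W // 2)):
            total = 0.0
            for dt, dh, dw in product((0, 1), repeat=3):
                # High-pass axes subtract the second sample of each pair.
                sign = -1 if (i * dt + j * dh + k * dw) % 2 else 1
                total += sign * video[2 * t + dt][2 * h + dh][2 * w + dw]
            band[t][h][w] = total / 8
        bands[name] = band
    return bands

def triplane(video):
    """Project a T x H x W volume onto three 2D planes by averaging out one axis."""
    T, H, W = len(video), len(video[0]), len(video[0][0])
    hw = [[sum(video[t][h][w] for t in range(T)) / T for w in range(W)] for h in range(H)]
    tw = [[sum(video[t][h][w] for h in range(H)) / H for w in range(W)] for t in range(T)]
    th = [[sum(video[t][h][w] for w in range(W)) / W for h in range(H)] for t in range(T)]
    return {"HW": hw, "TW": tw, "TH": th}

# A tiny 2 x 2 x 2 "video" whose brightness increases from frame 0 to frame 1.
video = [[[1.0, 1.0], [1.0, 1.0]],
         [[3.0, 3.0], [3.0, 3.0]]]
bands = haar3d(video)
print(bands["LLL"])           # [[[2.0]]]: average brightness
print(bands["HLL"])           # [[[-1.0]]]: temporal change between the frames
print(triplane(video)["TW"])  # [[1.0, 1.0], [3.0, 3.0]]
```

The planes summarize the whole clip cheaply (global context), while the seven high-frequency wavelet subbands keep the local changes that averaging throws away (fine detail).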

Conventional video generation models have relied on high-performance computing resources to produce high-resolution videos. By overcoming the limitations of methods based on CNN autoencoders, HVDM produces natural, high-quality videos with limited resources.

The research team evaluated HVDM on video benchmark datasets including UCF-101, SkyTimelapse, and TaiChi. HVDM outperformed existing methods in video quality, producing natural motion and realistic details.

Professor Yoo commented, "HVDM is a groundbreaking model that can efficiently generate high-resolution videos even when high-performance computing resources are limited," adding, "It is expected to be widely used in industrial fields such as video production and simulation."

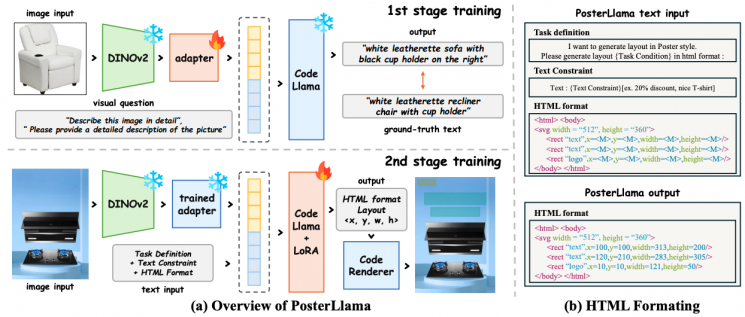

③ Web-UI Design AI: Instantly Create Advertising Posters!

The research team also developed a multimodal layout generation model that can automatically create advertising banners and web UI designs even with a small amount of data. This model processes images and text simultaneously, automatically generating appropriate layouts based on user input alone.

Previous models struggled to make full use of text and image information because of data scarcity. The new model overcomes this issue and greatly improves the practicality of automated advertising and web UI design. By maximizing the interaction between text and images, it generates optimized designs that reflect both the visual elements and the text.

The research team converted layout information into HTML code. This format lets the model draw fully on the knowledge already contained in pretrained language models, and on this basis the team built an automatic generation pipeline that performs well even with limited data. In benchmark tests, the model showed up to a 2800% improvement in performance.
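To show what "layout as HTML" can look like, here is a minimal sketch that serializes a layout to HTML text and parses generated HTML back into boxes. The element classes, pixel-based `style` attributes, and function names are hypothetical, since the article does not give the team's exact format. The point is only that a layout becomes ordinary text a language model can read and write.

```python
import re
from dataclasses import dataclass

@dataclass
class Element:
    kind: str    # hypothetical element classes, e.g. "text", "logo", "underlay"
    left: int    # position and size in pixels on the canvas
    top: int
    width: int
    height: int

def layout_to_html(elements, canvas_w, canvas_h):
    """Serialize a layout as HTML so a language model can treat it as text."""
    lines = [f'<div class="canvas" style="width: {canvas_w}px; height: {canvas_h}px">']
    for e in elements:
        lines.append(
            f'  <div class="{e.kind}" style="left: {e.left}px; top: {e.top}px; '
            f'width: {e.width}px; height: {e.height}px"></div>'
        )
    lines.append("</div>")
    return "\n".join(lines)

# Matches element divs only; the canvas div has no "left:" and is skipped.
_ELEMENT_RE = re.compile(
    r'<div class="(\w+)" style="left: (\d+)px; top: (\d+)px; '
    r'width: (\d+)px; height: (\d+)px"></div>'
)

def html_to_layout(html):
    """Parse (model-generated) HTML back into layout elements."""
    return [Element(m.group(1), *map(int, m.groups()[1:])) for m in _ELEMENT_RE.finditer(html)]

layout = [Element("logo", 20, 16, 120, 40), Element("text", 20, 300, 360, 60)]
html = layout_to_html(layout, 400, 600)
print(html)
assert html_to_layout(html) == layout  # the round trip preserves the layout
```

Because the output is plain text, a model only has to generate a string in a format it has likely seen during pretraining, and the parser recovers the boxes for rendering.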

During pretraining, the team used image-caption datasets and augmented the training data by combining depth maps with ControlNet. This greatly improved the quality of the generated layouts and reduced distortions that can arise during data preprocessing, resulting in more natural designs.

Professor Yoo emphasized, "With only about 5,000 data samples, our model outperformed previous models that required more than 60,000 samples," adding, "This innovation will make advertising banner and web UI design automation much more accessible, not only to experts but also to general users."

The research was supported by the National Research Foundation of Korea (NRF), the Ministry of Science and ICT (MSIT), the Institute of Information & Communications Technology Planning & Evaluation (IITP), and UNIST. The results are expected to broaden the use of AI across various industries by improving both performance and efficiency.

© The Asia Business Daily(www.asiae.co.kr). All rights reserved.