Voice interaction with users through Q&A

Image recognition to provide context-appropriate solutions

OpenAI's generative artificial intelligence (AI) chatbot ChatGPT has evolved to hold voice conversations with people and to answer questions about images.

Unlike other voice AI assistants, ChatGPT is 'conversational'

On the 25th (local time), OpenAI announced that ChatGPT will soon offer new features that allow it to 'see, hear, and speak.'

The 'listening and speaking feature' lets users exchange questions and answers by voice. Until now, users interacted with ChatGPT by typing text prompts; with this update, they will be able to speak with it directly.

This resembles existing AI assistants such as Amazon's Alexa, Apple's Siri, and Google Assistant.

However, while those assistants mainly focus on executing users' voice commands, ChatGPT can carry on a conversation.

When a user asks a question by voice, ChatGPT converts the speech into text, passes it to the large language model (LLM), receives an answer, and then converts that answer back into speech to respond.

ChatGPT will offer five different voices, and users can choose the one they prefer.

OpenAI also said it is considering collaborating with Spotify, the world's largest music streaming service, on translating content into other languages while preserving the speaker's original voice.

OpenAI stated that this feature will become available to paid ChatGPT subscribers within two weeks and will later be opened to everyone.

The voice feature will be available only in the iOS and Android apps.

Recognizes images and answers users' questions

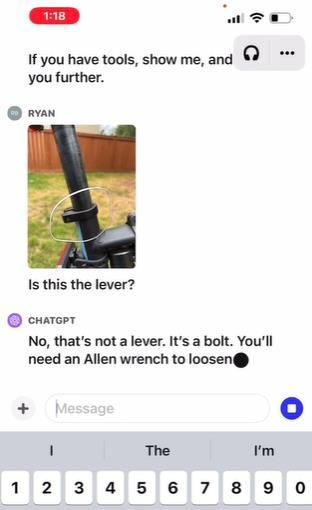

A demonstration of ChatGPT's image recognition feature released by OpenAI. [Image source: screenshot from the OpenAI website]


The 'seeing and answering feature' allows users to upload images and ask questions based on those images, and ChatGPT responds by analyzing the images.

For example, a user can upload a photo of pink sunglasses and request suggestions for matching clothes, or upload a photo of a math problem and ask for a solution.

In a demonstration of the image recognition feature released by OpenAI, a user uploaded a photo of a bicycle and asked how to lower the seat, and ChatGPT gave general instructions for adjusting seat height.

However, when the user circled the bicycle's seat clamp and asked for help, ChatGPT recognized the type of bolt and explained that a hex wrench was needed.

It also checked whether the user had a wrench of the right size by examining photos of the user manual and the toolbox.

This feature is expected to become available to paid subscribers and enterprise users within a few weeks. Unlike the voice feature, image processing will be available on all platforms.

OpenAI said, "Our goal is to build safe and beneficial AGI (Artificial General Intelligence)," adding, "Gradually providing new tools allows us to improve functionality and mitigate risks, preparing everyone to use more powerful systems in the future."

"Although 'synthetic voice' sounds natural, there is potential for criminal misuse"… Experts express concerns

However, experts are concerned that the AI-generated synthetic voice introduced in this voice feature update could be misused for criminal purposes.

While synthetic voices can give users a more natural experience, they could also enable more convincing deepfakes (AI-generated fake audio, images, or video that appear real).

Researchers have already begun studying how deepfakes could be used to breach cybersecurity systems.

Addressing these concerns, OpenAI emphasized, "ChatGPT's synthetic voices were created with voice actors we worked with directly, not collected from strangers."

However, OpenAI has not disclosed how it will use ChatGPT users' voice inputs or how it will protect that data.

The company's terms of service state that consumers own their input data to the extent permitted by applicable law.

© The Asia Business Daily(www.asiae.co.kr). All rights reserved.